Reinforcement learning for power engineers

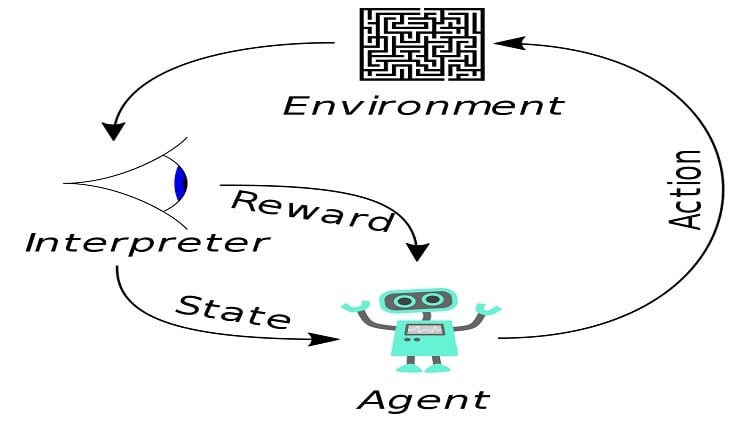

N. Mughees | October 18, 2022

The typical framing of a reinforcement learning scenario: an agent takes actions in an environment, which are interpreted into a reward and a representation of the state, and both are fed back to the agent. Source: Megajuice/CC0 1.0

Power and energy engineers are responsible for the development, installation and maintenance of electric power generation, transmission and distribution services. Current power systems are dynamic networks of electrical components that have evolved over more than a century. During that time, financial, technical, environmental and governmental pressures have pushed traditional power grids to evolve into more complex, resilient, productive and sustainable smart grids. Smart grids enable bidirectional energy and information flows between consumers, producers, transmission and distribution system operators, and demand response aggregators, but these same changes have also made the power system considerably harder to plan and operate.

A high proportion of renewable energy sources, such as solar and wind, increases the uncertainty in a power system. Additionally, the deregulation of the energy market and the active participation of consumers complicate the task of integrating distributed energy resources. Resolving these issues requires effective grid planning and operation methods. Moreover, grid transformation is increasing complexity and uncertainty in both business transactions and physical electricity flows. Future smart grids will therefore require a system capable of monitoring, forecasting, scheduling, learning and making real-time decisions about power consumption and production. This calls for more intelligent and efficient methods, such as reinforcement learning.

Introduction to reinforcement learning

Reinforcement learning, along with its deep learning-based variant, deep reinforcement learning, is a machine learning technique inspired by behavioral psychology, where behavior is shaped by pairs of stimulus and response. It has grown in popularity due to its success in solving difficult sequential decision-making problems. Numerous power system problems can be reduced to sequential decision-making tasks. Traditionally, methods such as convex optimization, programming and heuristics were used to solve these problems. However, reinforcement learning offers several advantages over each of these routes.

In comparison with traditional convex optimization methods, reinforcement learning does not require an exact objective function. Instead, it evaluates decision-making behavior through a reward function. Additionally, deep reinforcement learning can handle higher-dimensional data than convex optimization methods. In contrast to programming methods, reinforcement learning makes decisions based on the current state, so those decisions can be made online and in real time. Finally, in comparison to heuristic methods, reinforcement learning or its deep version tends to be more robust and to converge more stably, which makes it attractive for decision-making problems.

The technology

Reinforcement learning is used to determine a behavioral policy, or strategy, that maximizes a specified criterion. That criterion is typically the cumulative sum of rewards obtained over time by interacting with a particular environment through trial and error. A reinforcement learning system is composed of a decision-maker, referred to as the agent, operating in a state-based environment model. The agent can perform certain actions depending on its current state. After selecting an action at a given time, the agent receives a scalar reward and moves to a new state that depends on the present state and the selected action.
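The interaction loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a power system model: the `ToyBatteryEnv` class, its two-level price, its one-unit capacity steps and the two policies are all hypothetical and were invented for this example.

```python
import random


class ToyBatteryEnv:
    """Hypothetical toy environment: a battery trading against an alternating price."""

    PRICES = [1.0, 3.0]  # assumed low/high price per energy unit, alternating each step
    CAPACITY = 2         # assumed maximum number of stored energy units

    def reset(self):
        self.t = 0
        self.charge = 0
        return (self.t % 2, self.charge)  # state = (price index, stored energy)

    def step(self, action):
        """Apply an action: -1 = discharge (sell), 0 = idle, +1 = charge (buy)."""
        price = self.PRICES[self.t % 2]
        new_charge = min(max(self.charge + action, 0), self.CAPACITY)
        delta = new_charge - self.charge  # energy actually bought (+) or sold (-)
        reward = -price * delta           # buying costs money, selling earns it
        self.charge = new_charge
        self.t += 1
        return (self.t % 2, self.charge), reward


def run_episode(env, policy, steps=24):
    """Run the agent-environment loop and return the cumulative reward."""
    state = env.reset()
    total = 0.0
    for _ in range(steps):
        action = policy(state)            # the agent picks an action from the state
        state, reward = env.step(action)  # the environment returns new state and reward
        total += reward
    return total


def random_policy(state):
    """Trial and error in its crudest form: act at random."""
    return random.choice([-1, 0, 1])


def rule_policy(state):
    """Hand-written rule: buy when the price is low, sell when it is high."""
    price_index, _ = state
    return 1 if price_index == 0 else -1
```

Under these assumptions, `run_episode(ToyBatteryEnv(), rule_policy)` returns 24.0, since each low-price purchase at 1.0 is followed by a high-price sale at 3.0. A learning agent would have to discover a comparable policy from the rewards alone.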

Markov decision processes are the fundamental formalism of reinforcement learning, and they are built on the Markov property. The Markov property states that the future of the process depends only on its current state and the chosen action, so the agent does not need to track the complete history of the process. At every time step, the agent performs an action that changes the state of the environment and yields a reward. On top of this reward signal, value functions, which estimate the expected cumulative reward, and an optimal policy are defined.
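In standard textbook notation, these ideas can be written compactly. The symbols below are assumptions introduced for illustration and do not come from the article: $s_t$, $a_t$ and $r_t$ are the state, action and reward at time $t$, $\gamma \in [0, 1)$ is a discount factor, and $Q^*$ is the optimal action-value function.

$$
\begin{aligned}
&\text{Markov property:} && P(s_{t+1} \mid s_t, a_t) = P(s_{t+1} \mid s_0, a_0, \ldots, s_t, a_t) \\
&\text{Discounted return:} && G_t = \sum_{k=0}^{\infty} \gamma^{k}\, r_{t+k+1} \\
&\text{Bellman optimality:} && Q^*(s, a) = \mathbb{E}\!\left[\, r_{t+1} + \gamma \max_{a'} Q^*(s_{t+1}, a') \;\middle|\; s_t = s,\ a_t = a \right] \\
&\text{Optimal policy:} && \pi^*(s) = \arg\max_{a} Q^*(s, a)
\end{aligned}
$$

The return $G_t$ is the cumulative reward the agent tries to maximize, and the optimal policy simply picks the action with the highest optimal value in each state.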

Toward deep reinforcement learning

The transition from reinforcement learning to deep reinforcement learning has opened new doors for the power system. In traditional tabular reinforcement learning, for example Q-learning, the state and action spaces are small enough for value functions to be represented as arrays or tables. In such circumstances, these approaches can frequently identify the exact optimal value functions and policies. In real-world applications, however, the state and action spaces are often too large or continuous for a table to be practical. To address this issue, estimated value functions are expressed as parameterized functions with a weight vector, such as deep neural networks, rather than as a table. Deep reinforcement learning can complete complex tasks with little prior knowledge thanks to its ability to learn various levels of abstraction from data.
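The difference between a table and a parameterized value function can be illustrated with a short sketch. It applies the same Q-learning update to a table and to a linear function with a weight vector. The sizes, step size, discount factor, one-hot feature map and sample transitions are all assumptions chosen for illustration; a deep reinforcement learning method would replace the linear function with a neural network.

```python
N_STATES, N_ACTIONS = 4, 2
ALPHA, GAMMA = 0.5, 0.9  # assumed step size and discount factor


def tabular_update(q_table, s, a, r, s_next):
    """Q-learning update on a table with one entry per (state, action) pair."""
    target = r + GAMMA * max(q_table[s_next])
    q_table[s][a] += ALPHA * (target - q_table[s][a])


def features(s, a):
    """Hypothetical feature map: a one-hot vector indexed by (state, action)."""
    phi = [0.0] * (N_STATES * N_ACTIONS)
    phi[s * N_ACTIONS + a] = 1.0
    return phi


def q_hat(w, s, a):
    """Parameterized estimate: dot product of the weight vector and the features."""
    return sum(wi * xi for wi, xi in zip(w, features(s, a)))


def semi_gradient_update(w, s, a, r, s_next):
    """Semi-gradient Q-learning update applied to the weight vector."""
    target = r + GAMMA * max(q_hat(w, s_next, b) for b in range(N_ACTIONS))
    td_error = target - q_hat(w, s, a)
    phi = features(s, a)
    for i in range(len(w)):
        w[i] += ALPHA * td_error * phi[i]  # gradient of a linear q_hat is phi


q_table = [[0.0] * N_ACTIONS for _ in range(N_STATES)]
w = [0.0] * (N_STATES * N_ACTIONS)

# Made-up transitions (state, action, reward, next state), for illustration only.
transitions = [(0, 1, 1.0, 1), (1, 0, 2.0, 2), (0, 1, 1.0, 1)]
for s, a, r, s_next in transitions:
    tabular_update(q_table, s, a, r, s_next)
    semi_gradient_update(w, s, a, r, s_next)
```

With one-hot features the weight vector reproduces the table entry for entry, so the table is a special case of the parameterized form. The benefit appears when the features, or a neural network, let similar states share weights, which is what makes very large state spaces tractable.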


Deep reinforcement learning is widely used by power engineers to solve optimization and control problems in power systems, for example in demand-response programs, energy management in microgrids and operational control. It can develop control strategies that minimize energy costs even without advance knowledge of real-time energy prices and load demand. It can also increase the rate of renewable energy utilization and help control household appliance usage. Additionally, it can plan storage scheduling strategies and adapt to changing electricity prices in real time for industrial or domestic customers.
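As a deliberately small illustration of storage scheduling under changing prices, the sketch below trains a tabular Q-learning agent to operate a one-unit battery. Everything in it is an assumption made for the example: the alternating two-level price, the battery capacity, the hyperparameters and the function names. The agent is never told the price pattern and learns only from the rewards it receives. A realistic application would use measured prices and loads and, typically, a deep network in place of the table.

```python
import random

PRICES = [1.0, 3.0]   # assumed low/high price, alternating every step
ACTIONS = [-1, 0, 1]  # discharge (sell), idle, charge (buy)
CAPACITY = 1          # assumed battery capacity in energy units
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # assumed learning hyperparameters


def step(state, action):
    """Toy environment dynamics: return (next state, reward)."""
    price_idx, charge = state
    new_charge = min(max(charge + action, 0), CAPACITY)
    reward = -PRICES[price_idx] * (new_charge - charge)  # pay to buy, earn to sell
    return (1 - price_idx, new_charge), reward


def greedy_action(q, state):
    """Pick the action with the highest estimated value in this state."""
    return max(ACTIONS, key=lambda a: q[(state, a)])


def train(n_steps=5000, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {((p, c), a): 0.0
         for p in (0, 1) for c in range(CAPACITY + 1) for a in ACTIONS}
    state = (0, 0)
    for _ in range(n_steps):
        if rng.random() < EPSILON:
            action = rng.choice(ACTIONS)      # explore
        else:
            action = greedy_action(q, state)  # exploit current estimates
        next_state, reward = step(state, action)
        best_next = max(q[(next_state, a)] for a in ACTIONS)
        q[(state, action)] += ALPHA * (reward + GAMMA * best_next - q[(state, action)])
        state = next_state
    return q


q = train()
```

After training, `greedy_action(q, (0, 0))` selects charging when the price is low and the battery is empty, and `greedy_action(q, (1, 1))` selects discharging when the price is high and the battery is full.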

Conclusion

With the growing penetration of renewable energy and the strengthening of deregulation and privatization, the power system faces new issues that must be addressed by smart grid research and development. Reinforcement learning, alone or combined with deep learning, offers a way to address the challenges that traditional methods face in the changing power system.