'Psychopath AI' Developed in MIT Media Lab

Marie Donlon | June 06, 2018 Source: MIT

Part April Fools’ joke, part lesson about the impact of data quality on artificial intelligence (AI), researchers at the Massachusetts Institute of Technology (MIT) Media Lab developed a first-of-its-kind “psychopath AI.”

Called Norman, the AI was inspired by a character of the same name from Alfred Hitchcock’s horror classic Psycho.

According to the researchers, Norman was trained to caption images using deep learning. Rather than training the AI on traditional data, however, the MIT team trained Norman using "an infamous subreddit (its name is redacted due to its graphic content) that is dedicated to documenting and observing the disturbing reality of death."

Through material drawn from the unnamed Reddit group, Norman was exposed to images of people dying as well as other gruesome content.

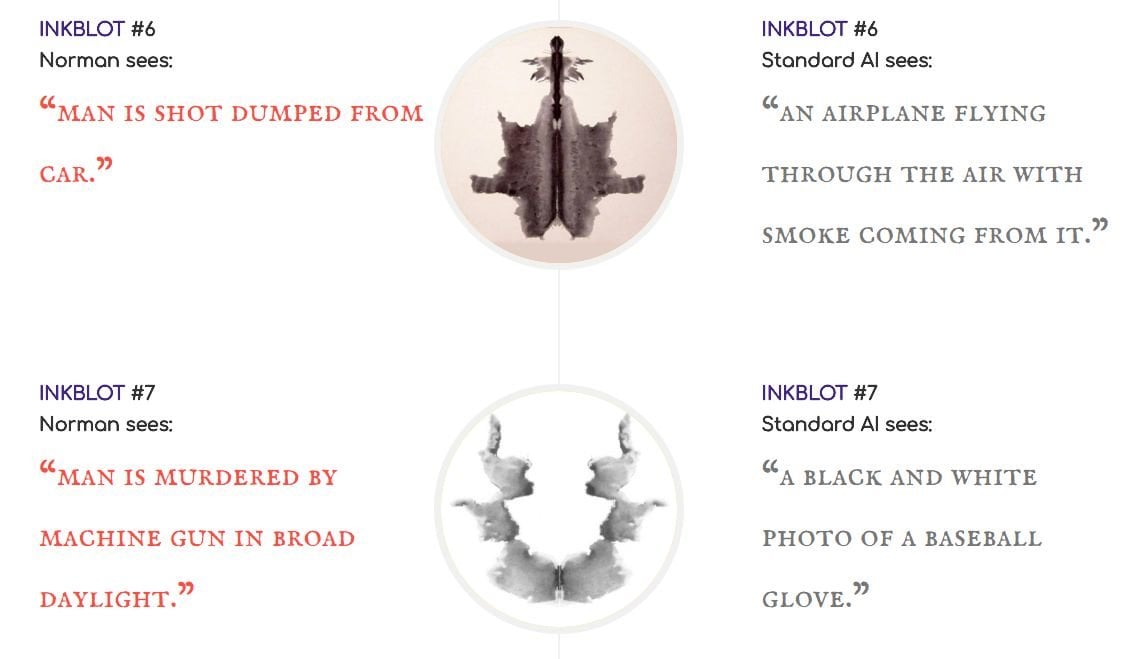

The team compared Norman's captions with those created by "a standard image-captioning neural network (trained on MSCOCO dataset) on Rorschach inkblots," and the differences were stark. One of Norman's captions suggested that an electrocution was taking place, while the standard AI described the same inkblot as two birds sitting on a tree branch.

In another example, Norman captions an image as a man being murdered by machine gun while the standard AI captions that same image as a black and white photo of a baseball glove. According to the team, Norman’s captions were "unremittingly bleak — it saw dead bodies, blood and destruction in every image."

Explaining why they set out to conduct such bleak research, the team said, "Norman is born from the fact that the data that is used to teach a machine learning algorithm can significantly influence its behavior. So when people talk about AI algorithms being biased and unfair, the culprit is often not the algorithm itself, but the biased data that was fed to it. The same method can see very different things in an image, even sick things, if trained on the wrong (or, the right!) data set."

"Data matters more than the algorithm," said Professor Iyad Rahwan, one of Norman's creators. "It highlights the idea that the data we use to train AI is reflected in the way the AI perceives the world and how it behaves."
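The point about data can be illustrated with a toy sketch. This is not Norman's architecture or training data, which the article does not describe in detail. It is a deliberately simple stand-in in which the same "algorithm" (memorize examples, then return the caption of the closest match) is trained on two different data sets. The feature names, the training pairs, and the `train` and `caption` helpers are all hypothetical and invented for illustration.

```python
# Toy illustration only: NOT Norman's actual model or data.
# All features and training pairs below are made up for this sketch.

def train(examples):
    """'Training' here simply memorizes (feature set, caption) pairs."""
    return [(frozenset(features), text) for features, text in examples]

def caption(model, features):
    """Return the caption of the stored example that shares the most features."""
    features = frozenset(features)
    _, best_text = max(model, key=lambda pair: len(pair[0] & features))
    return best_text

# Two hypothetical training sets: identical features, different captions.
everyday_data = [
    ({"dark", "symmetric", "thin"}, "two birds sitting on a tree branch"),
    ({"dark", "round", "textured"}, "a black and white photo of a baseball glove"),
]
grim_data = [
    ({"dark", "symmetric", "thin"}, "a man is electrocuted"),
    ({"dark", "round", "textured"}, "a man is shot"),
]

# Same code path, different data.
standard_model = train(everyday_data)
norman_like_model = train(grim_data)

# An ambiguous, inkblot-like input that matches neither training example exactly.
inkblot = {"dark", "symmetric", "blurry"}

standard_caption = caption(standard_model, inkblot)
norman_like_caption = caption(norman_like_model, inkblot)

print("standard:   ", standard_caption)
print("norman-like:", norman_like_caption)
```

Both models run exactly the same code on exactly the same input; only the training examples differ, and so do the captions.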

GIGO: Garbage in, garbage out. That principle still holds.

And you were expecting what? Psychology is psychology. We pull from our knowledge base when confronted with the unknown. It's all we got.